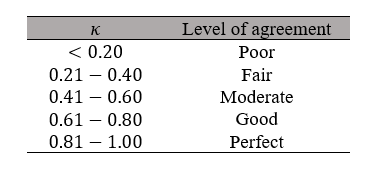

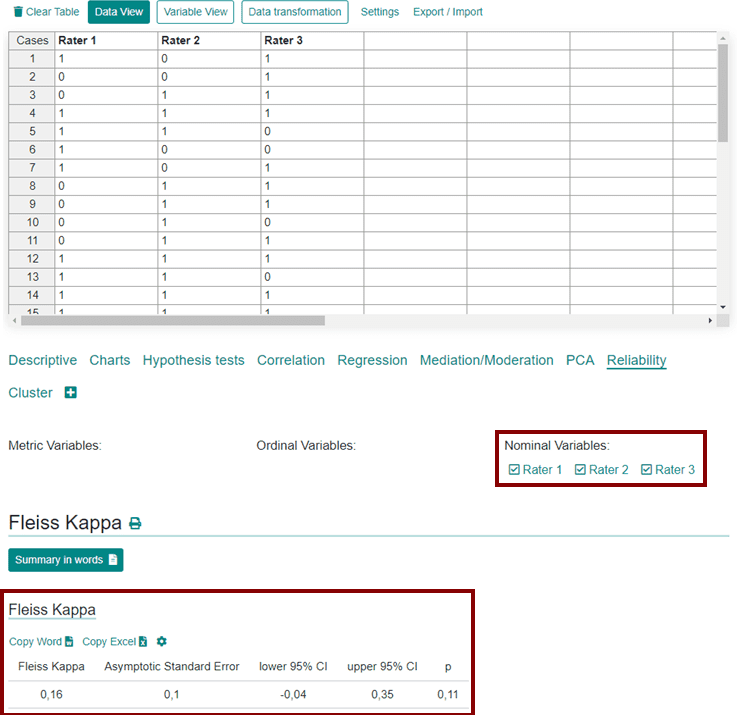

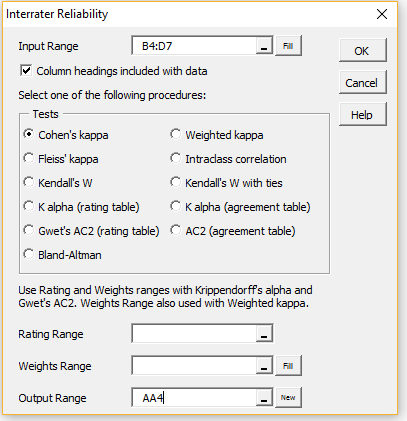

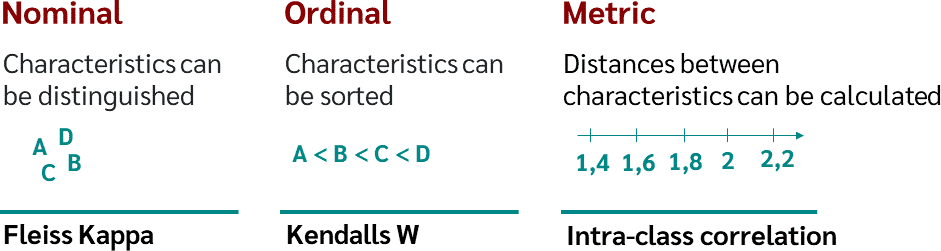

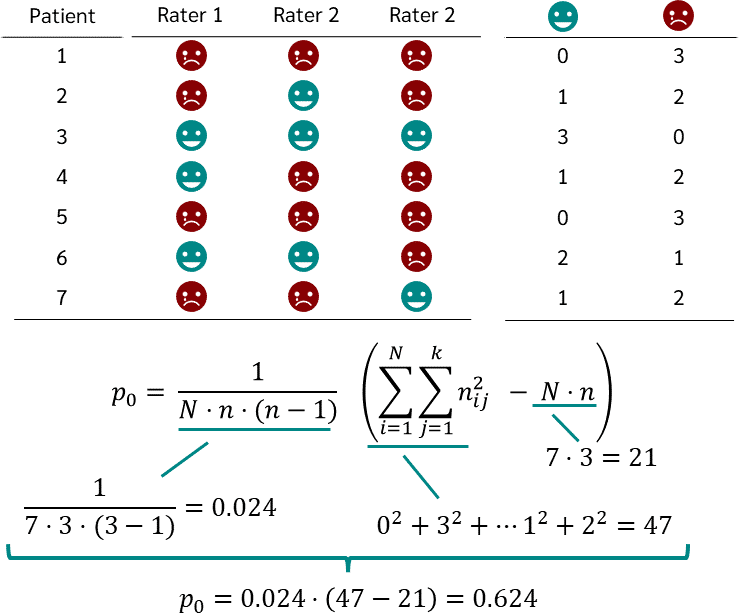

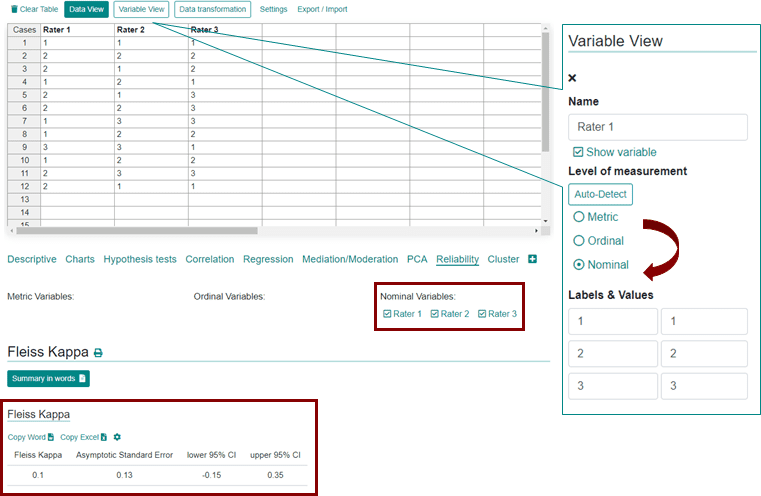

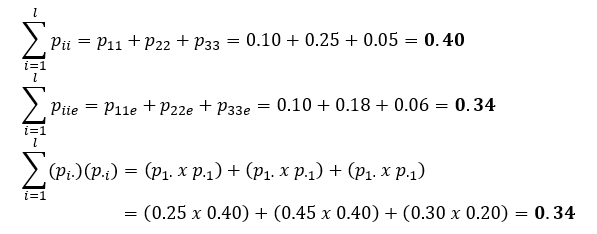

Fleiss' multirater kappa (1971) is a chance-adjusted index of agreement for multirater categorization of nominal variables.

GitHub - efi/fleiss-kappa: A tiny, MIT-licensed Java implementation of the "Fleiss Kappa" measure for the inter-rater reliability of categorical ratings represented as either `int[][]` or `long[][]`

Cohen's Kappa and Fleiss' Kappa: How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium
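
The entries above all concern Fleiss' kappa, a chance-adjusted agreement index for several raters assigning subjects to nominal categories. As a rough illustration of how the statistic is computed, here is a minimal Python sketch. It is not the API of the efi/fleiss-kappa repository or of any other library. The function name `fleiss_kappa` and the input layout (one row per subject, one column per category, each cell holding the number of raters who chose that category) are assumptions made for this example, as is the requirement that every subject is rated by the same number of raters.

```python
from typing import Sequence


def fleiss_kappa(counts: Sequence[Sequence[int]]) -> float:
    """Fleiss' kappa for a subjects-by-categories matrix of rating counts.

    counts[i][j] is the number of raters who put subject i in category j.
    Hypothetical helper for illustration; assumes every row has the same total.
    """
    n_subjects = len(counts)
    if n_subjects == 0:
        raise ValueError("need at least one subject")

    n_raters = sum(counts[0])
    if n_raters < 2:
        raise ValueError("need at least two ratings per subject")
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every subject must have the same number of ratings")

    n_categories = len(counts[0])

    # Per-subject agreement: share of rater pairs that agree on subject i.
    per_subject = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    observed = sum(per_subject) / n_subjects

    # Overall proportion of ratings that fell into each category.
    total_ratings = n_subjects * n_raters
    proportions = [
        sum(row[j] for row in counts) / total_ratings
        for j in range(n_categories)
    ]
    # Agreement expected if raters chose categories at random
    # according to those overall proportions.
    expected = sum(p * p for p in proportions)

    if expected == 1.0:
        # All ratings landed in a single category, so kappa is undefined.
        raise ValueError("kappa is undefined when only one category is used")

    return (observed - expected) / (1.0 - expected)


# Made-up data: 2 subjects, 3 raters, 2 categories, perfect agreement.
print(fleiss_kappa([[3, 0], [0, 3]]))  # 1.0
```

A value of 1 means complete agreement, values near 0 mean agreement no better than chance, and negative values mean agreement worse than chance.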